We have entered the intelligent age: a period in which software doesn’t just store and move information, but can interpret it, generate it, and increasingly act on it. That shift will reshape how we work, how value is created, and how societies organise themselves, much like industrialisation or the rise of the internet did in earlier eras.

If that sounds dramatic, it’s because the change is structural. AI isn’t a single product trend. It is a new layer of capability that will seep into nearly every industry and function, from customer support and marketing to logistics, software development, legal work, education, healthcare, and public administration. Some applications will feel mundane, like better search or faster document handling. Others will be transformational, such as systems that plan, decide, and execute tasks across tools.

And yes, there’s tension. That’s normal when the ground shifts.

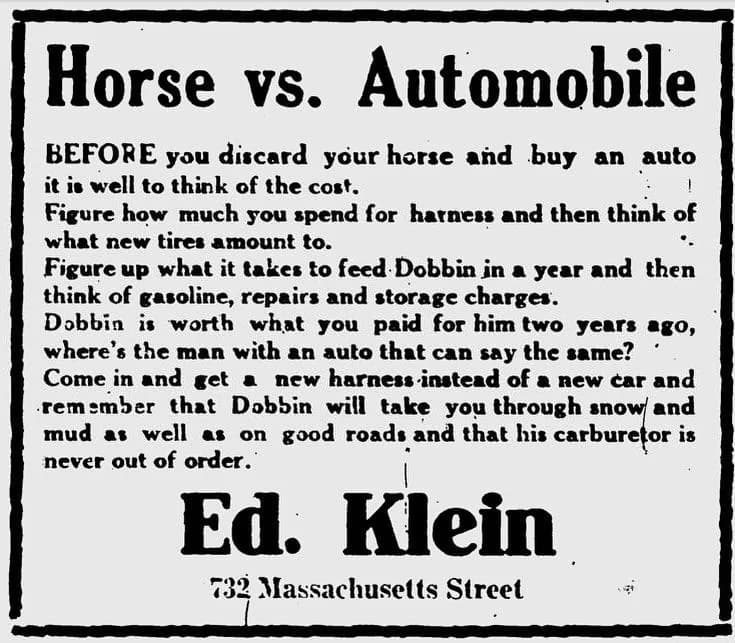

The horse sellers and the cars

Whenever a new technology threatens existing models, the first reaction is often denial wrapped in confidence.

Picture the early days when automobiles were first becoming accessible beyond hobbyists and the ultra-wealthy. Imagine a group of horse sellers watching this noisy, unreliable machine rattle down the road. It’s easy to hear the arguments. Cars break down. Cars are unsafe. Cars are only for rich people. A good horse is proven.

Some of those objections would even be true at first. Early cars were messy. Roads were poor. Standards weren’t established. The technology was immature.

But the mistake wouldn’t be noticing the flaws. The mistake would be assuming those flaws were permanent, and that the world would remain organised around the existing system.

That’s the risk today: not scepticism, but stagnation. The people and organisations that treat AI as a fad may end up optimising for a world that is already changing.

Yes, there’s hype. No, that doesn’t make AI optional.

It’s impossible to talk about AI honestly without acknowledging the hype. We are in an early phase where standards are still forming, best practices are uneven, and “AI strategy” is sometimes more storytelling than substance. Markets also tend to reward bold promises faster than careful execution, which can make the whole space feel noisier than it needs to be.

That’s exactly why some dismiss the entire field. But hype and reality can both be true at the same time.

A useful historical parallel is the early internet era. There was a bubble, and there were crashes, but the underlying infrastructure kept improving and ultimately became foundational. You can believe we’re early, believe some things are overhyped, and still recognise that the core capabilities are real and compounding.

Resistance is human, and it’s not the end of the story

Lately, a recognisable anti-AI sentiment has started to take shape. It often bundles several concerns into one, including talk of an “AI bubble,” frustration with overhyped marketing, and very real fears about jobs, trust, and social stability. The result is a posture that treats AI mainly as a threat or as something to discredit, because it’s easier to focus on what can go wrong than to engage with a technology that feels uncertain and fast-moving.

When uncertainty rises, people look for stability. When livelihoods and identity feel threatened, fear and rejection are predictable responses. History is full of examples of communities pushing back against mechanisation and automation, because the consequences were immediate while the benefits felt distant, theoretical, or uneven.

It’s worth saying clearly: fear isn’t ignorance. Often it’s a signal that change is happening faster than our institutions and habits can comfortably absorb.

This is also where the horse-and-car analogy becomes more than a rhetorical flourish. Early cars were noisy, unreliable, and easy to mock, so it was tempting for the “horse world” to treat them as a passing fad. But history didn’t just show that cars won. It showed why they won. Cars democratised mobility at a scale horses never could, and they unlocked a level of travel and logistics that became foundational to modern life. Even before you get to today’s global economy, large cities were already running into very practical limits of horse-based transport, including sanitation, space, and sheer throughput. Horses simply couldn’t support the scale of a growing, urbanising world.

A similar pattern shows up in the Swing Riots in England around 1830, when agricultural labourers destroyed threshing machines that threatened their work and wages. Comparable protests against machinery appeared in other parts of Europe during the nineteenth century. These protests were driven by genuine hardship and anxiety. The reaction wasn’t irrational. It was human. But from today’s perspective, it’s also clear that industrialisation became a net positive for society. We could not feed and supply modern populations at modern standards of living without mechanised production, logistics, and the productivity gains that followed. The lesson isn’t that people should have accepted it without question. The lesson is that major technological shifts create real disruption, and the right response is not denial. It is guiding the transition so that the benefits are widely shared.

So the goal shouldn’t be to “win” a debate about AI. The goal should be to adapt with agency: to understand the technology, shape how it’s deployed, and build the norms, tools, and safeguards that allow society to capture the upside without sleepwalking into avoidable downsides.

A more useful question: how do we respond well?

From here, the most productive stance is neither hype nor despair. It’s pragmatic optimism: the belief that AI will be a net positive if we build and use it thoughtfully.

That response starts with learning the real capabilities and the real limits. AI is already valuable for everyday work such as drafting, summarising, translating, brainstorming, coding assistance, and searching across large volumes of text. At the same time, it can be confidently wrong, it can miss nuance, and it can produce plausible outputs without reliable grounding. The answer isn’t to demand perfection before adoption. It’s to develop better judgement about what can be accelerated safely, what needs verification, and where humans must remain accountable.

The second part is focusing on workflows rather than demos. AI value rarely comes from a flashy prompt screenshot. It comes from being integrated into real processes so that cycle times drop, clarity improves, and knowledge becomes easier to access. In many organisations, transformation begins quietly. A few minutes saved here, a repeated friction removed there, and suddenly entire teams move faster without feeling like they “changed everything.”

The third part is choosing tools that increase agency through transparency and control. As AI becomes more embedded in work and private life, novelty matters less than trust. Trust grows when you can see which model produced an output, compare behaviour across providers, and configure systems intentionally. It also grows when you can understand cost and latency trade-offs, apply clear constraints, and make sensible decisions about data handling. There won’t be “one AI” that fits all contexts. There will be many models with different strengths, failure modes, and incentives. The organisations that do best, technically and ethically, will treat AI as an engineering discipline rather than a magic layer. That means auditability, predictable configuration, and clear guardrails.

This is also where I think the conversation needs to become more concrete. “Responsible AI” often gets discussed as a set of abstract principles. Those principles matter, but they only become real when responsibility shows up in how products behave, in transparency, in configurability, and in systems that help people make informed choices rather than hiding complexity behind vague marketing.

Why you should be optimistic

With all the noise, it’s easy to overlook the upside. But the upside is enormous.

AI can make expertise more accessible and lower the barrier to doing high-quality work. It can help small teams operate with the leverage that once required large organisations. It can reduce administrative overload in systems that are already strained, and it can accelerate research and engineering cycles by turning rough ideas into workable drafts faster. It can also support creativity and learning at scale, especially when it’s designed to encourage curiosity rather than dependency.

The biggest shift may be cultural. We’ll stop thinking of “intelligence” as something only humans provide, and start thinking of it as something we can collaborate with while keeping humans responsible for goals, values, and consequences. That last part is crucial, because AI will amplify what we ask of it. The future depends on what we choose to optimise: speed or quality, persuasion or understanding, short-term gains or long-term trust.

A simple takeaway

AI is here to stay. That isn’t a threat. It’s a reality. And realities can be shaped.

The best way forward is to stay curious, learn the tools deeply enough to evaluate them, demand transparency from the systems you rely on, and build workflows where humans remain accountable.

The intelligent age won’t reward the loudest opinions. It will reward people and organisations that combine competence with responsibility.

That’s a future worth building.